We spent 50 years training people for a world that no longer exists.

In the first two parts of this article, we looked at how schools were built on an industrial model to produce executors, and how we paradoxically made the very disciplines that teach critical thinking (like mathematics and philosophy) optional at the exact moment AI was rendering execution obsolete. Given this reality, it’s time to ask the only question that matters: how do we reinvent schools to preserve our relevance in tomorrow’s world?

The world actually needs people who can think for themselves

American anthropologist David Graeber, in his striking and deliberately provocative book “Bullshit Jobs”, had already diagnosed with sharp precision that an enormous share of our service economy rested on mechanical, repetitive, bureaucratic tasks requiring no genuine human judgment [10]. He described “box tickers”, “duct tapers”, “flunkies” and other holders of positions so pointless to society that the employees themselves privately believed that if their role vanished overnight, it would make no difference whatsoever to the running of the world. Crucially, he showed that these workers knew it perfectly well, and that this knowledge caused deep psychological suffering, a profound loss of meaning, and a corrosive cynicism.

Graeber published that book in 2018, well before the explosion of generative AI. Yet he had already seen what we refused to acknowledge: an entire economy had been built on tasks that could have been automated long ago and had been artificially kept alive for social and political reasons. These jobs didn’t exist because they created value; they existed because our social model was built on the premise that everyone needed a job, whatever it might be.

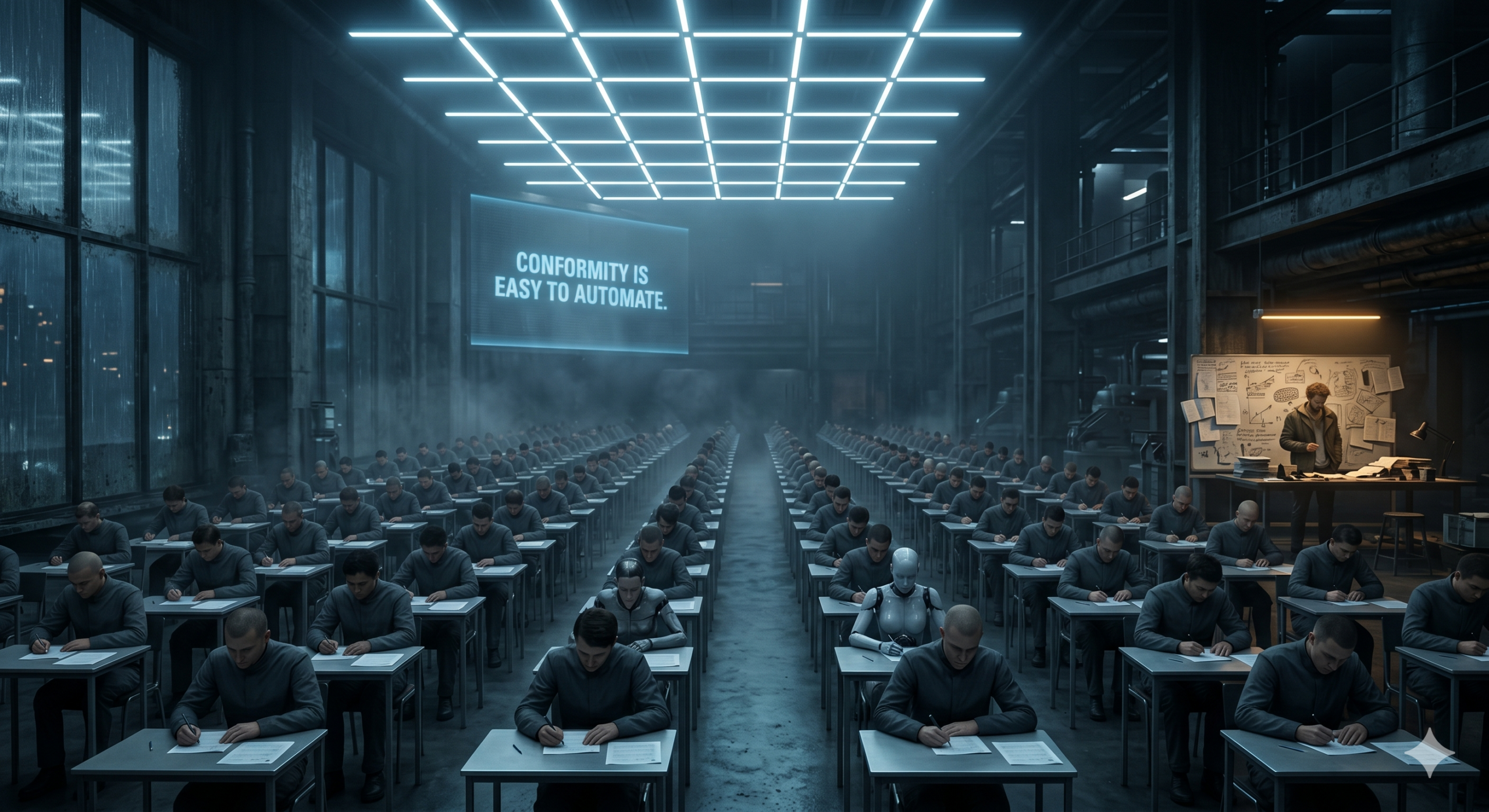

Where the philosopher Bernard Stiegler explained the inner workings of cognitive proletarianisation, David Graeber laid out its devastating social and economic consequences. The school, in its industrial form, was no accident within this broader system: it was its essential pipeline, the conveyor belt supplying those pointless jobs. Year after year, it turned out cohorts of compliant individuals, people capable of sitting in silent rows, following instructions without question, respecting rigid schedules, and regurgitating formatted information without ever challenging the substance of it.

These are precisely the routine tasks, the bureaucratic execution jobs, the “bullshit jobs”, that generative artificial intelligence is now vacuuming up first, with chilling efficiency. A study from Stanford University’s Digital Economy Lab, published in 2025, produced a figure that sent shockwaves through economic circles: since late 2022, early-career workers (those aged 22 to 25) in the occupations most exposed to AI have seen a 13% relative decline in employment [11]. Entry-level jobs, traditionally made up of repetitive cognitive tasks such as summarising lengthy documents, drafting basic reports, handling first-line customer support, or writing simple code, are the first to take the full force of this wave of automation.

Major investment banks and consulting firms point in the same direction. In a widely discussed report, Goldman Sachs estimates that generative AI could expose the equivalent of 300 million full-time jobs worldwide to automation [12]. McKinsey goes even further in its projections, arguing that 57% of the working hours currently logged in developed countries are already technically automatable with existing technology, because AI is no longer limited to physical or manual tasks as industrial robots once were; it now automates complex cognitive functions [13].

These figures are staggering, and the temptation to catastrophise is real. But it takes intellectual courage to flip this unsettling reality on its head: what if, at bottom, this is an unexpected opportunity for humanity?

Artificial intelligence isn’t simply destroying jobs or threatening careers. It acts as a magnifying mirror, making brutally visible what we should have been questioning long ago. It forces us to admit, with our backs against the wall, that we spent 50 years evaluating, grading and ranking memory and conformity, only to discover, with cutting irony, that rote memory is the most easily automated of all human capacities.

Tomorrow’s world no longer needs carriers of intellectual cargo. It no longer needs human hard drives, walking automatic translators, or prodigiously fast calculators. It has a vital need for people who know where to direct thought, and why. It needs individuals capable of navigating radical uncertainty with composure, of demonstrating genuine empathy, truly disruptive creativity, and solid ethical judgment when confronting dilemmas nobody has faced before.

AI excels at processing vast amounts of information (it can ingest entire libraries in seconds), but it fails miserably at decoding the nuances of a complex human emotion, at catching an unspoken undercurrent in a negotiation, or at building an authentic, warm connection with an anxious patient. It can generate a thousand business ideas at the click of a button, but it cannot feel the passion and resilience needed to see a single one through in the face of adversity. It can brilliantly summarise a demanding philosophical treatise, but it cannot be deeply unsettled by it, lose sleep over it, or have its entire worldview shifted by it.

What schools should actually be teaching

The question is not “how do we protect schools from AI?” That question is already a losing one: you don’t protect an institution from reality; you transform it to meet that reality head on.

The real question is simpler, and far deeper: what will remain irreplaceably human in ten years’ time? And how can schools become the guardians of that?

John Dewey answered this question back in 1938, long before it had been framed in these terms [14]. For him, learning was never a matter of transmission; it was always a matter of experience. A student who works through a real problem, makes mistakes, adjusts and tries again develops something no list of course content can give them: a genuine appetite for reasoning. Piaget put it differently: a child doesn’t receive knowledge, they construct it [15]. Error is not a fault to be corrected in red pen; it is a necessary, irreplaceable step in the building of an autonomous intelligence.

None of these ideas are new. They’ve been sitting in the drawers of pedagogy for decades. What is new is that AI has just made them urgent.

What does this look like in practice? Rather than asking a student to recite a definition, ask them why it’s incomplete. Rather than grading a word-for-word translation, assess their ability to bring a text to life within another culture. Rather than penalising mistakes, put them at the centre of the discussion, because understanding why you went wrong is infinitely more formative than copying out the correct answer. And teach them to use AI critically: to identify its blind spots and verify what it claims, not in order to distrust it, but to use it without surrendering to it.

This isn’t a pedagogical revolution waiting to be invented. It’s a political choice waiting to be made. Evaluating thought is harder than evaluating memory. It takes more time, demands more judgment, and resists automated grading algorithms. But that’s precisely why it’s worth something.

In a world where machines are learning to imitate intelligence, our last great responsibility, and perhaps our greatest privilege, is teaching humans to remain intelligently human.

So what now?

The choice is simple, even if it’s uncomfortable. Either we keep assessing our children on their ability to do what machines do better than they do, and condemn them to run a race they’ve already lost. Or we finally accept the need to ask what school is actually there for.

This won’t be easy. It requires political courage, and recent educational reforms haven’t always shown we have any. Changing an education system means touching something deeply personal. It means questioning decades of certainties: the competitive exams entire generations sat, the degrees families sacrificed years to obtain. Nobody wants to hear that the road they travelled was perhaps the wrong one.

But reality doesn’t wait. Employers say it more and more openly: they’re no longer looking for graduates capable of reciting lectures. They’re looking for people who can think, who can doubt, who can adapt, who can hold a position when everyone else says the opposite. This gap between what schools produce and what the world demands is nothing new. AI has just made it impossible to ignore.

So here’s the question we should all be asking, whether we’re a parent, a teacher, a recruiter, or simply a citizen: did the system that trained us actually teach us to think, or only to obey? Were we taught to question, to defend an idea under pressure, to recognise when a line of reasoning is heading off a cliff? Or did we mostly learn to tick the right boxes, give the right answers, and not make too many waves?

Most of us already know the answer. And if we’re honest, that answer is a little uncomfortable.

Because the problem doesn’t stop with today’s students. It concerns the adults we’ve already become. How many of us earned a degree without ever really learning to argue with someone who pushes back? How many went through years of education without once being asked to defend an unpopular idea, to challenge something taken for granted, to create something that hadn’t existed before? How many of us learned to work alone on a blank page, but never to think collectively about a problem with no right answer?

Nobody in particular is to blame. The fault lies with a system designed for a vanished era, one that couldn’t, or wouldn’t, reinvent itself.

AI doesn’t replace humans who know how to think. It replaces those who were trained not to. That’s not inevitable. It’s a choice we make every day, in every classroom, in every exam, in every hiring decision. And as long as we refuse to see it, we’ll keep producing people who are remarkably well-equipped for a world that no longer exists.

The real question isn’t whether AI will replace us. The real question is what we actually put in our heads during all those years of schooling. And whether it was enough to face a world nobody has seen yet.

References

For those who still take the time to verify, compare, and understand sources, these references remind us of a simple truth: information still exists. But in the near future, even this simple act may become a luxury, as AI-generated content multiplies and the real risk shifts from misinformation to the dilution of reality in an ocean of plausible narratives.

[10] Graeber, D. (2018). “Bullshit Jobs: A Theory”. Simon & Schuster, New York. French translation: “Bullshit Jobs” (2018), Les Liens qui Libèrent.

[11] Brynjolfsson, E., et al. (2025). “Canaries in the Coal Mine? Six Facts about the Recent Decline in Entry-Level Employment”. Stanford Digital Economy Lab. Available at: https://digitaleconomy.stanford.edu/app/uploads/2025/11/CanariesintheCoalMine_Nov25.pdf

[12] Goldman Sachs (2023). “The Potentially Large Effects of Artificial Intelligence on Economic Growth”. Goldman Sachs Economic Research.

[13] McKinsey Global Institute (2023). “The economic potential of generative AI: The next productivity frontier”. Available at: https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/the-economic-potential-of-generative-ai-the-next-productivity-frontier

[14] Dewey, J. (1938). “Experience and Education”. Kappa Delta Pi, New York.

[15] Piaget, J. (1970). “Science of Education and the Psychology of the Child”. Orion Press, New York.

[16] Prost, A. (1968). “Histoire de l’enseignement en France, 1800-1967”. Armand Colin, Paris.

[17] Taylor, F. W. (1911). “The Principles of Scientific Management”. Harper & Brothers, New York.