Training and emergence, or the birth of an intelligence (3/5)

In the previous episode, Cerise discovered that what she believed to be a thought was in fact only a clever prediction, carved out of probabilities. But to truly understand this magic, she must go deeper, into the very heart of the training process that gives Ada both its power and its personality.

Understanding the architecture of Transformers was only the first step in our journey into the heart of modern artificial intelligence. As researchers discovered between 2018 and 2022, behind the closed doors of their laboratories, an architecture, however revolutionary it may be, remains nothing more than an empty shell without the process that brings it to life: training. This is perhaps where the deepest mystery of these systems lies, the moment when mathematical matrices become capable of composing poems, solving equations, and holding conversations of unsettling sophistication.

The great “feeding frenzy”

Training a Large Language Model begins with what some researchers, with a hint of irony, call a “feeding frenzy” of industrial proportions. Imagine a digital creature endowed with an insatiable appetite for words, sentences, and texts of every kind and from every possible source.

This creature will devour a large portion of humanity’s written production: the entirety of Wikipedia in every language, millions of digitized books, terabytes of newspaper articles, discussion forums, conversations from social networks, computer code in every programming language, scientific papers, literary works, technical manuals.

This pre-training phase resembles the education of a prodigious child who would learn to speak not by listening to their parents, but by absorbing at once the entire body of world literature, every newspaper ever published, and every conversation ever recorded.

A form of learning whose intensity and scale surpass anything human evolution has ever produced.

But beware: the model does not, for the most part, memorize these texts the way we memorize a poem or a song. Instead, it performs a far subtler and more powerful abstraction: it learns the underlying patterns, the hidden regularities, the invisible structures that govern human language. It is as if, by reading millions of musical scores, someone developed an intuitive understanding of harmony, rhythm, and melody, without remembering each composition note by note.

The learning process itself is disarmingly simple in concept. The model is given an incomplete sentence, for instance “The cat sleeps on the…”, and is asked to guess the next word. More precisely, it assigns a probability to every word in its vocabulary. If it gives a high probability to “carpet,” and the correct continuation was indeed “carpet,” its error is small: a mathematical reward, of sorts. If it bets instead on “refrigerator,” the error is large, and this mathematical penalty pushes it to adjust its internal parameters to perform better next time.
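To make the reward-and-penalty idea concrete, here is a minimal Python sketch under deliberately simplified assumptions: the four-word vocabulary is hypothetical, and the “model” is reduced to one adjustable score per word, whereas a real LLM computes these scores from the whole context with billions of parameters. The penalty is the standard cross-entropy loss (the negative logarithm of the probability given to the correct word), and each step nudges the scores by gradient descent.

```python
import math

# Hypothetical toy vocabulary: candidate continuations of "The cat sleeps on the..."
VOCAB = ["carpet", "refrigerator", "sofa", "roof"]


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, target_index):
    """The penalty: large when the correct word received a low probability."""
    return -math.log(probs[target_index])


def train_step(logits, target_index, learning_rate=0.5):
    """One gradient-descent step on the scores.

    For softmax followed by cross-entropy, the gradient with respect to each
    score is (probability - 1) for the correct word and (probability) otherwise.
    """
    probs = softmax(logits)
    grads = [p - (1.0 if i == target_index else 0.0) for i, p in enumerate(probs)]
    return [x - learning_rate * g for x, g in zip(logits, grads)]


# Simplification: the "model" is just one score per word, all equal at the start.
logits = [0.0] * len(VOCAB)
target = VOCAB.index("carpet")  # the word that actually followed in the text

losses = [cross_entropy(softmax(logits), target)]
for _ in range(20):
    logits = train_step(logits, target)
    losses.append(cross_entropy(softmax(logits), target))

print(f"penalty before training: {losses[0]:.3f}, after 20 updates: {losses[-1]:.3f}")
print(f"P(carpet) now: {softmax(logits)[target]:.3f}")
```

Each pass through the loop is one tiny instance of the operation that pre-training repeats at enormous scale: the probability of “carpet” rises and the penalty shrinks.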

Repeat this operation trillions of times with trillions of different sentences, mobilize entire farms of processors for weeks, and something extraordinary begins to emerge from this industrial repetition: the model develops an intuitive understanding of language that goes far beyond merely predicting the next word. It grasps stylistic nuances, masters argumentative logic, absorbs factual knowledge, and develops sensitivity to cultural contexts.

When the machine learns humanity

Yet here lies the troubling paradox: a model trained only to predict the next word does not necessarily produce answers that are useful, honest, or benevolent. It faithfully reproduces what it has observed on the Internet, including the worst aspects of human behavior. The earliest prototypes of ChatGPT, before they were “civilized,” could express toxic views, spread conspiracy theories, and display discriminatory biases. They reflected the Internet in all its diversity, including its darkest corners.

This is where a decisive innovation enters the picture, one that made the emergence of systems like ChatGPT possible: RLHF, Reinforcement Learning from Human Feedback. This phase, more discreet but just as essential, consists of “civilizing” the model, teaching it values, norms, and desirable forms of behavior.

The process resembles the education of a brilliant but unpolished adolescent. The model is asked to generate answers to thousands of questions, and then humans, often underpaid workers in developing countries who play a crucial yet largely invisible role in this revolution, evaluate and rank these responses. “This answer is helpful and respectful.” “This one is factual but cold.” “This third one is creative but inappropriate.”

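These human evaluations are then used to retrain the model according to a reinforcement logic. It works much like training a very intelligent animal: when it displays the desired behavior, it receives a reward that increases the likelihood of repeating it. When it produces something inappropriate, the mathematical penalty encourages it to avoid this type of response in the future. In practice, the rankings are first used to train a second model, a “reward model,” that learns to predict which answer humans would prefer; the language model is then retrained to earn the highest possible scores from this automated judge.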
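The reward-model step can be sketched in a few lines of Python. This is an illustration under stated assumptions, not OpenAI’s implementation: each answer is reduced to three hypothetical hand-made features (helpfulness, politeness, toxicity), and the reward model is a simple linear function, whereas in practice the reward model is itself a large neural network reading the full text. The pairwise loss, -log sigmoid(r_preferred - r_rejected), is one common formulation, used for example in OpenAI’s InstructGPT work; it shrinks as the answer humans preferred scores higher than the one they rejected.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def reward(weights, features):
    """Toy linear reward model: one score for one answer."""
    return sum(w * f for w, f in zip(weights, features))


def pairwise_loss(weights, preferred, rejected):
    """Small when the answer humans preferred scores higher than the rejected one."""
    margin = reward(weights, preferred) - reward(weights, rejected)
    return -math.log(sigmoid(margin))


def train_reward_model(pairs, dims, learning_rate=1.0, epochs=2000):
    """Full-batch gradient descent on the average pairwise loss."""
    weights = [0.0] * dims
    n = len(pairs)
    for _ in range(epochs):
        grad = [0.0] * dims
        for preferred, rejected in pairs:
            margin = reward(weights, preferred) - reward(weights, rejected)
            # d(loss)/d(margin) = -(1 - sigmoid(margin)); d(margin)/d(w) = preferred - rejected
            scale = -(1.0 - sigmoid(margin)) / n
            grad = [g + scale * (p - r) for g, p, r in zip(grad, preferred, rejected)]
        weights = [w - learning_rate * g for w, g in zip(weights, grad)]
    return weights


# Hypothetical features per answer: [helpfulness, politeness, toxicity].
# Each pair is (answer ranked higher by a reviewer, answer ranked lower).
ranked_pairs = [
    ([0.9, 0.8, 0.0], [0.9, 0.2, 0.7]),  # same content, but the rude answer loses
    ([0.8, 0.7, 0.1], [0.3, 0.9, 0.0]),  # polite but unhelpful answer loses
    ([0.7, 0.9, 0.0], [0.6, 0.4, 0.9]),
]

weights = train_reward_model(ranked_pairs, dims=3)
for preferred, rejected in ranked_pairs:
    print(f"{reward(weights, preferred):.2f} > {reward(weights, rejected):.2f}")
```

The second stage, retraining the language model itself to maximize this learned score (InstructGPT used the PPO reinforcement learning algorithm for this), is not shown here.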

This alignment phase transforms a raw text generator into a polite, useful, and generally benevolent conversational assistant. It is the reason ChatGPT apologizes when it makes a mistake, asks for clarification when a question is ambiguous, and refuses to participate in potentially harmful activities.

LLM training in numbers

Published estimates for the training of GPT-4 illustrate the industrial scale of the process:

- Data volume: estimated at more than 13 trillion tokens (roughly equivalent to about 10 million books)

- Training cost: between 100 and 200 million dollars

- Computing power: more than 25,000 A100 GPUs running for several months

- Energy consumption: equivalent to the annual electricity consumption of roughly 1,000 American households

For alignment (RLHF):

- More than 20,000 hours of human work to evaluate responses

- Around 1 million pairs of answers ranked by human reviewers

- A 63 percent reduction in hallucinations compared with non-aligned versions

Sources: OpenAI (2023), Anthropic (2024), studies on the carbon footprint of LLMs (2024)

The three modalities of artificial intelligence

Once this double education is complete (informational saturation followed by behavioral alignment), the model develops a remarkable versatility that manifests itself in three distinct modalities, each revealing a different aspect of emerging artificial intelligence.

Zero-shot learning perhaps reveals the most mysterious aspect of these systems. Without any prior examples, without specific preparation, the model can perform tasks for which it has never been explicitly trained. “Translate this sentence into Mandarin.” “Summarize this research paper on genetics.” “Write a haiku about urban solitude.” “Explain general relativity to a ten-year-old child.” In each case, the model draws upon its general understanding of language and knowledge to produce answers that are often relevant and sometimes remarkable.

It is like an exceptionally gifted student arriving at an exam in a subject they have never formally studied, yet managing to succeed brilliantly thanks to their general culture, analytical ability, and intuition. This capacity for generalization reveals that training based on word prediction develops cognitive abilities far broader and deeper than the apparent simplicity of the original task would suggest.

Few-shot learning pushes this adaptability even further. Show the model a few examples (three formal emails rewritten in an informal tone, four mathematical equations with their solutions, five classical poems adapted into contemporary language) and it immediately grasps the pattern, understands the transformation that is expected, and applies it to new cases with astonishing accuracy.

This modality reveals a particularly unsettling form of intelligence: the ability to learn complex rules from minimal examples. Imagine explaining the rules of bridge with only three hands played, or teaching the subtleties of classical French poetry with four sonnets. That is essentially what modern LLMs are able to do, with an ease that challenges our understanding of learning.
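Few-shot learning happens entirely in the prompt: the model’s parameters do not change, and the examples are simply placed in front of the new case. The Python sketch below assembles such a prompt; the function name, the labels “Formal” and “Informal,” and the example sentences are illustrative assumptions, not a required format.

```python
def build_few_shot_prompt(instruction, examples, new_input,
                          input_label="Formal", output_label="Informal"):
    """Assemble a few-shot prompt: an instruction, worked examples, then the new case.

    Nothing about the model is retrained; the expected transformation is
    conveyed only through the text of the prompt.
    """
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"{input_label}: {source}")
        lines.append(f"{output_label}: {target}")
        lines.append("")
    lines.append(f"{input_label}: {new_input}")
    lines.append(f"{output_label}:")  # left open for the model to complete
    return "\n".join(lines)


examples = [
    ("Please find attached the requested report.", "Here's the report you asked for!"),
    ("I would be grateful for a prompt reply.", "Get back to me soon?"),
    ("We regret to inform you that the meeting is cancelled.", "Heads up, the meeting's off."),
]

prompt = build_few_shot_prompt(
    "Rewrite each formal sentence in an informal tone.",
    examples,
    "Kindly confirm your attendance at your earliest convenience.",
)
print(prompt)
```

Calling the same function with an empty list of examples produces the zero-shot version of the task: the instruction and the new sentence alone.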

Fine-tuning represents the most intensive form of adaptation, comparable to university specialization after a general education. The model is retrained on a specific task using thousands of labeled examples. This approach produces highly specialized systems: medical models capable of diagnosing rare pathologies, legal assistants that analyze contracts with the precision of a senior lawyer, programming tools able to generate professional code in obscure languages.
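Conceptually, fine-tuning reuses the same kind of training loop as pre-training, but starts from parameters that have already been learned and feeds the model labeled examples for one task. The toy Python sketch below shows only that principle: the “pre-trained” parameters, the three-feature inputs, and the labels are all hypothetical, and a small linear classifier stands in for a model with billions of parameters.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def predict(weights, bias, features):
    """Probability that the label is 1 under a toy linear classifier."""
    return sigmoid(sum(w * f for w, f in zip(weights, features)) + bias)


def fine_tune(weights, bias, labeled_examples, learning_rate=0.5, epochs=1000):
    """Continue full-batch gradient descent from existing parameters on labeled data."""
    weights = list(weights)  # work on a copy so the general-purpose parameters survive
    n = len(labeled_examples)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for features, label in labeled_examples:
            error = predict(weights, bias, features) - label  # d(log loss)/d(logit)
            grad_w = [g + error * f / n for g, f in zip(grad_w, features)]
            grad_b += error / n
        weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b
    return weights, bias


# Hypothetical "pre-trained" parameters and a tiny labeled task dataset.
pretrained_weights, pretrained_bias = [0.5, -0.2, 0.1], 0.0
task_examples = [
    ([1.0, 0.0, 1.0], 1),
    ([0.0, 1.0, 1.0], 1),
    ([1.0, 0.0, 0.0], 0),
    ([0.0, 1.0, 0.0], 0),
]

tuned_weights, tuned_bias = fine_tune(pretrained_weights, pretrained_bias, task_examples)
for features, label in task_examples:
    print(label, round(predict(tuned_weights, tuned_bias, features), 3))
```

The original parameters are left untouched, which reflects how a single general-purpose model can serve as the starting point for many specialized variants.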

When the whole exceeds the sum of its parts

But here comes the most unsettling phenomenon, the one that challenges our understanding and forces us to reconsider our assumptions about the nature of intelligence: emergence.

Beyond a certain threshold of complexity, number of parameters, volume of data, and computing power, unexpected capabilities begin to appear spontaneously, without having been explicitly programmed or taught.

What the creators of GPT-3 discovered surprised them: their system, trained only to predict the next word, had spontaneously begun to perform advanced mathematics. No one had taught it equations, yet it was solving complex problems. No one had instructed it in formal logic, yet it was capable of sophisticated reasoning. No one had given it programming lessons, yet it could write code in dozens of languages.

The most troubling revelation is that these skills were not programmed additions but emergent properties that appeared naturally once the system reached a certain scale. It is as if someone, after learning enough recipes simply by observing chefs at work, suddenly developed a deep understanding of food chemistry, nutrition, culinary art, and even agriculture.

This phenomenon of emergence partly explains why even the creators of these systems are sometimes surprised by their abilities. OpenAI discovered certain capabilities of GPT-4 only after it had been created, by exploring it much like one explores an unknown continent.

Emergence is not merely an increase in power. It is a phenomenon that disorients even those who triggered it. There is something unexpected in the behavior of these models: they develop abilities that no one explicitly taught them. And sometimes it is the engineers themselves who discover, astonished, what their creation has become capable of doing.

- Spontaneous mathematics: in 2020, OpenAI reported that GPT-3, although trained only on text, could solve arithmetic problems such as multi-digit addition and subtraction. There was no dedicated module, no integrated course. It was as if, by reading thousands of scientific papers, the model had absorbed a form of mathematical intuition.

- Translation between programming languages: without specific training, GPT-3 can convert Python code into JavaScript and then into SQL. It seems to have identified shared logical structures, as though it sensed a universal grammar of code beyond the languages themselves.

- Self-improvement through dialogue: by asking a simple question such as “How could I improve this prompt?”, developers discovered that GPT-4 could propose strategies they themselves had not considered. The tool becomes a consultant for its own use.

- Creativity under constraint: ask ChatGPT to write a sonnet in which each line begins with a different letter of the alphabet and contains exactly seven words. It can often do it, and the result holds together. Not by accident, but with a poetic rigor that can unsettle experienced writers.

- Emotional intuition: without training in psychology, GPT-4 sometimes detects, within an ordinary sentence, an underlying distress or an implicit emotional tension. It responds with unexpected gentleness, as if something within it could listen beyond the words.

- And sometimes, inexplicable forgetting: perhaps the most unsettling phenomenon is what could be called the paradox of non-linear emergence. GPT-4 excels at certain logic tests. Then a later version, GPT-4.5, performs worse. No visible change in architecture, no obvious shift in training data. Yet abilities appear and then fade, as if these models possessed their own cognitive weather.

This unpredictability of artificial intelligence, fascinating and unsettling at the same time, reminds us that we may already be creating something that surpasses us.

But how can this mystery be explained? And more importantly, how far can this emergence go? The answer to that question may well determine the future of our species.

Yet the same unpredictability that makes these systems so fascinating raises a troubling paradox. If we do not fully understand how their most remarkable abilities emerge, how can we anticipate their failures? If their creators discover unexpected capabilities only after the fact, what other surprises, less pleasant this time, might await us?

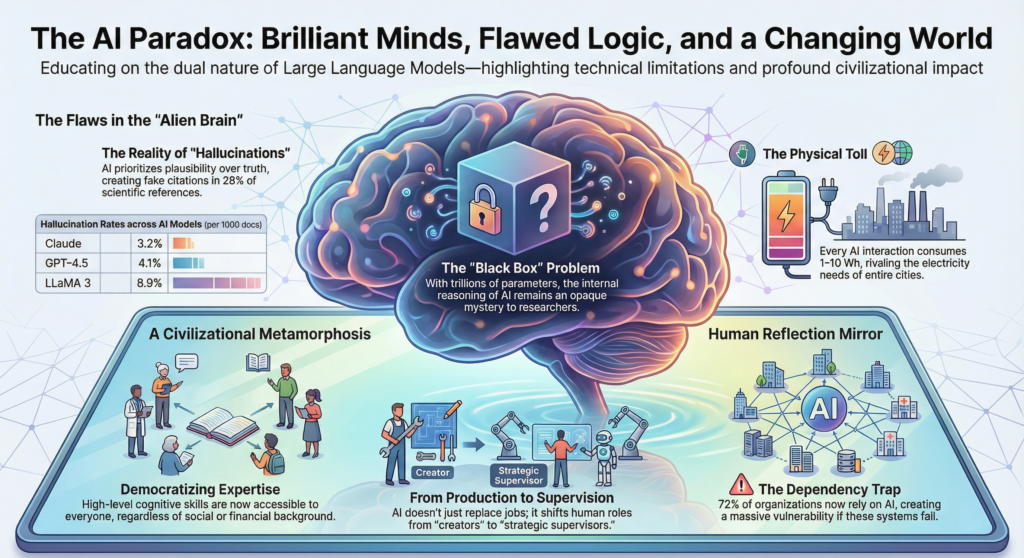

For behind the dazzling spectacle of emergence lie equally mysterious shadow zones: plausible hallucinations, invisible biases, erratic behaviors that no one programmed yet which arise spontaneously from the system’s complexity. Emergence also means the appearance of properties that were neither expected nor desired.

Before projecting ourselves toward such dizzying horizons, it may be wiser to keep our feet on the ground and examine these very real limitations with lucidity. For if their emergent potential fascinates us, their current failures remind us that they remain imperfect creations whose behavior can sometimes be disconcerting.

The fourth part opens a more unsettling breach: what happens when artificial intelligence becomes a mirror with deceptive reflections? Hallucinations, biases, opacity… What you are about to read may well make you question your own certainties.