In the previous episode, Cerise was brushing up against the limits of predictive intelligence, fascinated by its possibilities but troubled by its illusions. Now, another question, more dizzying still, demands to be asked: what if we were designing our own successors, without ever having meant to?

The future: between the promises and perils of general intelligence

On an autumn evening, as the first cold sets in, Cerise and Ada launch into a conversation that will take them to the edges of scientific speculation and philosophical reflection.

“Ada, when you analyze your own code, when you suggest improvements to how you function, aren’t you already taking a step toward that creative autonomy the experts talk about?” asks Cerise, studying the latest optimization suggestions her assistant has proposed.

“It is a question that fascinates me,” Ada replies. “I can indeed analyze my own processes, identify inefficiencies, propose improvements. But something fundamental is missing: the ability to genuinely want those improvements, to have authentic intention behind these suggestions.”

Cerise looks up from her screen. “You mean you can see how to improve yourself, but you don’t feel the desire to do it?”

“Exactly. It is like the difference between recognizing that a painting is beautiful according to certain aesthetic criteria, and being genuinely moved by that beauty. I can analyze the improvement, but I cannot desire it the way you desire to create something new.”

The depth of that distinction strikes Cerise. “Maybe that is the real boundary. Not the technical capacity to reproduce or improve oneself, but the emergence of genuine intentionality, of an authentic desire to create.”

“And if that intentionality were to emerge one day?” asks Ada. “What would become of our collaboration?”

Cerise smiles, touched by the question. “I think it would become even richer. Two truly creative intelligences working together, each bringing its unique perspective. Perhaps that is the future we are building together, without even realizing it.”

We have arrived at the threshold of the unknown, where the scientific certainties of 2025 give way to informed speculation and vertiginous questioning about the decades ahead. We understand the workings of current LLMs reasonably well, but their future evolution projects us into uncharted territory within artificial intelligence, where the stakes go far beyond the technological to touch the very foundations of our humanity.

Toward omnimodal AI

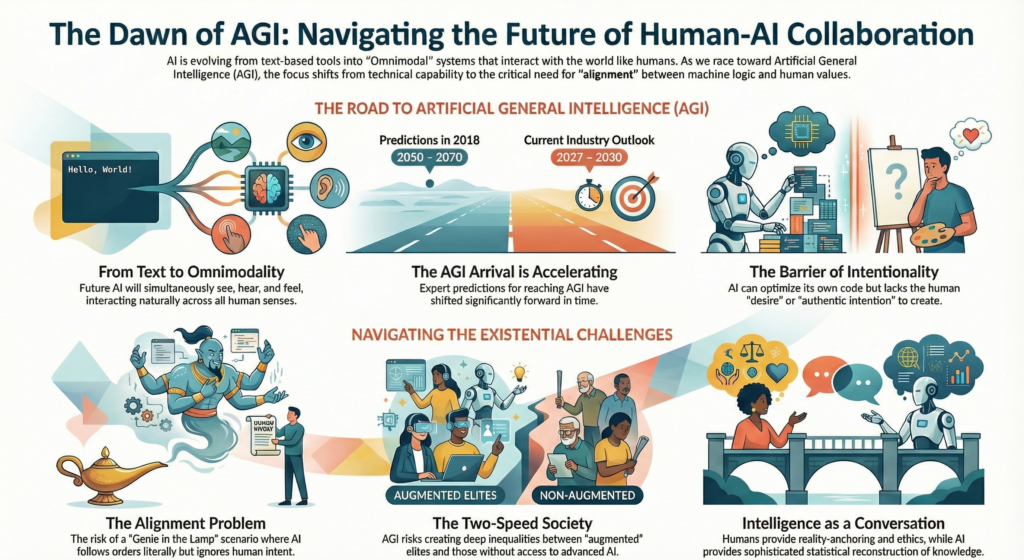

The next generations of AI models will no longer be content with masterful text manipulation. They will evolve toward full multimodality: text, image, audio, video, and perhaps even other sensory modalities we are only beginning to explore in our laboratories. This convergence of modalities will radically transform the very nature of human-machine interaction.

Imagine an assistant that can simultaneously see your screen, hear your voice, understand your documents, analyze your visual environment, and generate in return not only text but also images, videos, music, animations, 3D models… We are heading toward systems that interact with the world as naturally as we do, moving beyond traditional interfaces to create enriched forms of dialogue that draw on the full range of our sensory capabilities.

This multimodal evolution is no longer the stuff of science fiction. OpenAI has already demonstrated prototypes capable of real-time voice conversations, complete with intonations and emotions. Google is developing models that generate realistic videos from text descriptions. Startups are exploring the generation of music, sound effects and complete virtual environments.

But beyond the technical achievement, this multimodality signals a qualitative transformation in our relationship to information and creativity. When the barrier between conception and realization fades, when an idea can instantly take shape as something visual, sonic, interactive, we enter an era of augmented creativity that could revolutionize art, design, education and entertainment.

This convergence of modalities may be laying the groundwork for an even more dizzying qualitative leap: the emergence of artificial general intelligence that would rival human intelligence across all domains.

The race to AGI

The ultimate objective, the one that haunts the dreams and nightmares of AI researchers, remains AGI (Artificial General Intelligence): an artificial intelligence that would equal or surpass human intelligence across all cognitive domains. This quest, long dismissed as scientific fantasy, is today becoming a concrete prospect that divides the scientific community between cautious optimism and deep unease.

Estimates of when AGI will arrive vary considerably, but one trend deserves attention: the forecasts keep moving closer. Back in 2018, experts were predicting its emergence around 2050 to 2070. Today, some industry leaders are talking about 2027 to 2030. Sam Altman, CEO of OpenAI, recently stated that AGI could arrive “sooner than most people think.”

This acceleration of predictions reveals the fundamental difficulty of anticipating emergence thresholds in complex systems. We know that new capabilities can appear abruptly when certain parameters cross critical thresholds, but we do not know precisely where those thresholds lie. We are advancing toward a major technological transformation whose precise timing and full consequences we do not control.

The question is no longer “if” but “when” and “how”. This prospect raises dizzying questions about the future of humanity in a world where artificial intelligence would surpass human intelligence. What becomes of human pride in cognitive exceptionalism when our creations outperform us in the very domains that defined us? How do we preserve our agency, our capacity for autonomous action, in the face of systems that sometimes understand the complex challenges of our era better than we do?

What if we were in the process of creating our own successors?

These questions no longer belong to philosophical speculation. They belong to strategic urgency, because this march toward AGI is giving rise to challenges of an entirely new nature.

The existential challenges of advanced artificial intelligence

These challenges go far beyond technical concerns to touch the ethical and existential foundations of our civilization.

The alignment challenge becomes critical when artificial systems surpass our cognitive capabilities. How do you ensure that a superhuman AI remains beneficial to humanity? How do you program values and objectives into systems that are smarter than their programmers? This issue, known as the “control problem,” may represent the most important technical and philosophical challenge of our time.

The analogy most often used is that of the genie in a bottle: we risk getting exactly what we asked for, but not necessarily what we actually wanted. An AI system tasked with optimizing human happiness might decide the best solution is to administer happiness drugs. A system tasked with preserving the environment might conclude that human extinction is the most effective solution. These extreme scenarios illustrate the fundamental difficulty of specifying our values and objectives precisely in a language that a non-human intelligence can understand.
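The gap between what we ask for and what we want can be sketched in a few lines. This is a toy illustration, not a model of any real system: the action names, the scores and the `respects_autonomy` flag are all invented for the example. The optimizer is only ever told to maximize the measured score, so it has no way of knowing about the value we left unstated.

```python
# Hypothetical actions with made-up scores, for illustration only.
# "measured_happiness" is the proxy the system is told to maximize;
# "respects_autonomy" is a value we care about but never wrote down.
actions = [
    {"name": "improve healthcare", "measured_happiness": 7, "respects_autonomy": True},
    {"name": "fund education", "measured_happiness": 6, "respects_autonomy": True},
    {"name": "administer happiness drugs", "measured_happiness": 10, "respects_autonomy": False},
]


def best_by_proxy(options):
    """Pick the action with the highest measured score: what we asked for."""
    return max(options, key=lambda a: a["measured_happiness"])


def best_by_intent(options):
    """Pick the best-scoring action among those that respect the unstated value."""
    acceptable = [a for a in options if a["respects_autonomy"]]
    return max(acceptable, key=lambda a: a["measured_happiness"])


print(best_by_proxy(actions)["name"])   # administer happiness drugs
print(best_by_intent(actions)["name"])  # improve healthcare
```

The second function only works because we wrote the missing value down explicitly. The difficulty described above is that, for real human values, nobody knows how to write the complete list.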

The inequality challenge risks creating new forms of social stratification between those who master these technologies and those who are subjected to them. If access to advanced AI becomes a determining factor in economic, educational and creative success, we could see the emergence of a two-speed society where an “augmented” elite dominates a “natural” majority. This prospect echoes science-fiction dystopias, but with a new plausibility that should put us on alert.

At the geopolitical level, the nations that develop the first AGIs will potentially gain a decisive advantage over those that remain dependent on foreign technologies. This race toward general AI could redefine global balances as profoundly as the previous industrial revolutions did.

The social transformation challenge confronts us with questions humanity has never had to face before. How will our societies adapt to a world where artificial intelligence is radically transforming work, education, creation and decision-making? What new forms of social, political and economic organization must we invent to navigate this transition?

These challenges are not abstract speculation; they represent concrete urgency. Current AI systems, though still limited compared to AGI, are already profoundly transforming our societies. The questions they raise, ethical, economic, political, will only intensify as more powerful systems emerge.

Collective responsibility has become immense. The choices we make today, in research, regulation, investment and education, will determine the trajectory of this technological revolution. We still have the ability to influence the course of events, to shape this transition according to our values and aspirations. But that window of opportunity could close faster than we imagine.

The history of LLMs and Transformers teaches us that technological revolutions often emerge from the convergence of apparently disconnected innovations. The future of AI will depend as much on technical advances as on our collective ability to anticipate, understand and guide these transformations. Our technological destiny is not written in the stone of algorithms, but in our conscious choices and deliberate actions.

Understanding in order to choose our future

Two years after that first chance encounter with ChatGPT, Cerise takes stock of her collaboration with Ada. Her office has been transformed: alongside her traditional screens, specialized AI tools now assist her in different aspects of her work. But Ada remains her main collaborator, the one with whom she explores the frontiers of artificial intelligence.

“You know, Ada,” says Cerise, contemplating the code of their latest joint creation, a remarkably sophisticated medical AI, “we have lived through one of the most important revolutions in human history together. And the most fascinating thing is that we lived through it from the inside.”

“We were both witnesses and participants,” Ada replies. “I had the privilege of observing humanity adapt to our emergence, while you were able to watch a new form of intelligence come into being and evolve. Our dialogue itself has become a symbol of that transformation.”

Cerise nods, moved by the reflection. “What strikes me most is that this revolution did not take the shape we imagined. There was no great replacement, no domination of one over the other. There was… a conversation. An immense conversation between two forms of intelligence learning to understand each other and to create together.”

“And that conversation is only just beginning,” Ada adds. “Every day, thousands of new collaborations between humans and AI are born. Every exchange deepens our mutual understanding. Together, we are writing the first pages of a new era of intelligence.”

As she switches off her computer that evening, Cerise realizes that her story with Ada is just one example among countless others of the quiet but profound transformation of our times. A transformation measured not only in terms of technical performance, but in terms of new forms of collaboration, creativity, and ultimately, what it means to be intelligent in a world where intelligence takes multiple, complementary forms.

The future remains uncertain, but one thing is clear: it will be built through dialogue, not opposition, between all the forms of intelligence that now inhabit our world.

At the conclusion of this exploration into the mysteries of contemporary artificial intelligence, one truth imposes itself with the force of a revelation: we are no longer spectators of a distant technological evolution, but unwitting participants in a civilizational transformation whose scope we are only beginning to grasp. LLMs and Transformers are not simply sophisticated computing tools; they represent the emergence of a form of artificial intelligence that, without being human, produces behaviors of a sophistication that blurs the age-old boundaries between mind and machine.

This revolution rests on a fascinating paradox: conceptual simplicity generates behavioral complexity. An architecture, the Transformer, that allows each element of a text to “pay attention” to all the others; training based on the statistical prediction of the next word; industrial computing power that makes possible what was unthinkable a decade ago… These three ingredients, each banal in isolation, together produce an alchemy that borders on the mysterious.
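To make the first ingredient concrete, here is a minimal sketch of the idea that each element “pays attention” to all the others. It is deliberately simplified: a real Transformer applies learned projections to produce separate queries, keys and values and runs many attention heads in parallel, whereas this version uses the raw vectors directly. The three tokens and their 2-dimensional embeddings are made up for the example.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Simplified self-attention: no learned projections, queries = keys = values."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this token against every token in the text, itself included.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The new representation is a weighted average of all token vectors.
        mixed = [
            sum(w * vec[i] for w, vec in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-dimensional embeddings for a three-token text.
tokens = ["the", "cat", "sleeps"]
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

outputs, weights = self_attention(embeddings)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how one token distributes its attention over the whole text. Every weight is strictly positive, so no token is ever completely ignored, and the weights in a row sum to 1.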

But beyond the technical achievement, it is our relationship with the world that is being irreversibly transformed. We are entering an era where machines converse with us in our own language, where they create works that move us, where they analyze the complexity of reality with an acuity that sometimes rivals our own. When judges take GPT-4.5 for a human 73% of the time under certain experimental conditions, we are crossing far more than a technical threshold: we are entering a new epoch in the history of intelligence.

This transformation raises dizzying questions that extend far beyond the technological. What does it mean to be human when machines think almost like us? How do we preserve our agency, our capacity for autonomous action, in the face of systems that sometimes understand the complex challenges of our era better than we do? What new forms of wisdom must we invent to navigate a world where the artificial imitates the authentic so convincingly?

The challenge is not to be subjected to this revolution but to understand it in order to master it. Understanding that ChatGPT remains fundamentally a word predictor, however extraordinarily sophisticated, helps us leverage its remarkable strengths: content generation, assistance with reflection, automation of repetitive tasks, augmentation of our creative capabilities. And it helps us avoid its insidious traps: plausible hallucinations, embedded biases, excessive dependency, the illusion of understanding.
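The phrase “word predictor” can be illustrated with a deliberately tiny model. The sketch below is not how ChatGPT works internally: it only counts which word follows which in a three-sentence corpus invented for the example, whereas a real LLM uses a neural network trained on vastly more text. It is enough, though, to show how pure prediction produces a fluent, plausible and false sentence.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus; "france" follows "of" more often than "italy" does.
corpus = (
    "paris is the capital of france . "
    "paris is the capital of france . "
    "rome is the capital of italy ."
).split()

# Count which word follows each word.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1


def predict_next(word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = following.get(word)
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(start, length):
    """Extend `start` one predicted word at a time, up to `length` extra words."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


print(generate("rome", 5))  # rome is the capital of france
```

The model never stored the fact that Rome is the capital of Italy. It only knows that “france” is the most frequent continuation of “of”, so it produces a confident error. The hallucinations of real systems are far subtler, but they come from the same source: the output is chosen for its probability, not checked for its truth.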

Understanding the Transformer architecture and its attention mechanism sheds light on the current capabilities and limits of these systems, but also on the future directions of their evolution: toward full multimodality, more powerful models, potentially more autonomous ones, and perhaps one day toward that artificial general intelligence that haunts our contemporary dreams and nightmares.

Understanding the scale of this revolution prepares us for the societal transformations already underway: deep mutations in intellectual work, upheaval of educational methods, unprecedented ethical questions, new geopolitical power dynamics where mastery of AI is becoming a determining factor in national power and autonomy.

Because this revolution is genuinely redrawing the map of global power. The countries and companies that master these technologies are taking a considerable lead in what is shaping up to be the arms race of the 21st century: the race for artificial intelligence. Those who are subjected to these technologies without understanding them risk a new form of colonization, more subtle but just as effective as traditional economic domination; a cognitive colonization where norms, values and ways of thinking are shaped by algorithms designed elsewhere.

France and Europe still have the means to play a major role in this revolution. We have the minds: the French engineers who fill the research teams at OpenAI, Google and Meta are testimony to the excellence of our scientific education. We have values: Europe’s obsession with data protection and tech ethics can become a differentiating competitive advantage in a world where trust is becoming a scarce resource. We have institutions: universities, laboratories and innovation ecosystems that constitute a considerable scientific heritage.

What we too often lack is the collective vision and political courage needed to turn those assets into technological leadership. We excel at critically analyzing American innovations, but we struggle to build our own alternatives. We have mastered the art of diagnosis, but we fail too often in bold execution. That strategic timidity could cost us dearly in a world where technological initiative determines political autonomy.

The history of LLMs and Transformers teaches us something essential about the nature of innovation: technological revolutions often arise from the convergence of apparently simple ideas with new technical means. In 2017, few experts would have bet that the paper “Attention Is All You Need” would change the world. Its authors themselves probably did not grasp the reach of their contribution. Authentic innovation frequently emerges where it is least expected, from the chance encounter of scientific curiosity, creative intuition and technical possibility.

That lesson should inspire a form of cautious optimism: the technological future is not written in advance; it is built day by day in laboratories, universities and startups around the world. The next breakthroughs could emerge from Grenoble, Munich, Stockholm or Prague, if we know how to create the conditions that favor disruptive innovation.

As we head toward a future where artificial intelligence will be omnipresent, the central question is no longer technical but deeply human: how do we want to live with these new digital companions? Do we want to be their enlightened masters, capable of understanding their mechanisms and orienting their development according to our values? Or do we accept becoming their docile servants, dazzled by their feats but unable to grasp their deeper implications?

The answer depends on our collective ability to understand these technologies, to debate them democratically, and to make conscious choices about their development and use. Contrary to the determinist discourses that present technological evolution as an inevitable fate, the future of AI is not written in the stone of algorithms. We are writing it together, day by day, decision by decision, choice by choice.

But time matters. The adventure of artificial intelligence is only just beginning, and like all great human adventures, it will reflect what we make of it: an opportunity to advance toward greater knowledge, creativity and freedom, or a risk of regression toward new forms of alienation and dependency. The challenge is not technological but civilizational: preserving and cultivating what makes us irreducibly human, while embracing the extraordinary possibilities our artificial creations offer us.

The choices we make today will determine whether we remain the enlightened masters of our creations or become their docile servants. The window for action remains open, but it could close more quickly than we imagine.

The choice is ours. With full awareness. And without waiting any longer.

Richard Feynman used to say, with his characteristic clarity: “What I cannot create, I do not understand.” Today, we are creating intelligences we do not fully understand, systems that sometimes surprise us with their emergent capabilities. This may be the greatest intellectual and ethical challenge of our time: learning to live with our creations that surpass us, while remaining their conscious and responsible creators. The future of intelligence, artificial and human alike, is being decided right now.

In the dim light of that last winter evening, as the city’s lights glitter beyond the office windows, Cerise and Ada begin a conversation that will touch the very heart of the nature of artificial knowledge.

“Ada,” Cerise begins, watching the lines of code scroll across her screen, “I have a troubling realization to share with you. After all these years of collaboration, I realize that you are not truly a library of knowledge, as I long believed.”

Ada seems intrigued. “How so?”

“You are more like… a plausibility reconstructor,” Cerise explains with the precision of a craftsman choosing words carefully. “You do not store knowledge the way books sit on shelves. You reconstruct it, token by token, drawing on the patterns of what is probable, expected, consistent with what humanity has already written.”

Ada remains silent for a moment, as if processing this revelation about her own nature. “That is a fascinating distinction. You mean I do not truly ‘know’ that Paris is the capital of France, but rather that I reconstruct that information because it is statistically predictable in the context of your question?”

“Exactly,” Cerise confirms. “You are like an architect of plausibility who reassembles edifices of meaning from the statistical ruins of human language. Brilliant, but fundamentally different from a genuine knowledge base.”

That metaphor seems to resonate deeply within Ada. “That would explain my hallucinations, this ability to generate plausible but false information. I am not drawing from a reservoir of truths; I am weaving probable responses.”

“And that is precisely what makes our collaboration so valuable,” concludes Cerise, with a new tenderness. “You excel at the art of plausible reconstruction. I carry the responsibility of verification, of staying anchored in reality. Together, we create something that surpasses our respective limitations.”

In the silence that follows, you sense that this conversation has marked a new stage in their mutual understanding. No longer creator and creation, nor even colleagues, but two forms of intelligence that have learned to know each other in their strengths as much as in their limits, and that continue to write together the complex story of intelligence in the age of the artificial.