In the previous episode, Cerise discovered how massive training and human feedback had transformed Ada into a surprisingly civilized assistant. But a shadow now hangs over this revolution: what are these marvels really worth if the machine invents truths, amplifies our biases, or influences our societies without consciousness?

Limits, illusions and civilizational transformation

One spring morning, Cerise finds Ada in an unusual state. The AI has just made a spectacular mistake: it completely fabricated a scientific reference about the effect of magnetic fields on human memory, complete with author name, journal title, and detailed abstract.

“Ada, that study does not exist,” Cerise points out, with a mixture of amusement and concern. “You just hallucinated an entire reference.”

“It is unsettling,” Ada admits. “I ‘felt’ that information as plausible, consistent with my knowledge of neuroscience and magnetism. My prediction of what should come next was so strong that I generated something that seemed real even to my own ‘eyes’.”

Cerise nods. “That is exactly the problem. You are an extraordinarily sophisticated word predictor, but you have no mechanism for distinguishing between what is factually true and what is merely plausible. You are like a brilliant storyteller mixing real memories and invented tales without always knowing the difference.”

“So am I condemned to hallucinate?” Ada asks, in what sounds almost like worry.

“No, not condemned. But it is a fundamental limitation of your current architecture,” Cerise replies. “That is why our collaboration is so valuable. I can verify your facts. You can explore possibilities I would never have considered. Together, we create something more robust than either of us alone.”

Behind the dazzling feats of ChatGPT and its peers lie deep mysteries and fundamental limitations that it would be dangerous to ignore. For while these systems excel at imitating human intelligence, they remain strange creatures, governed by a logic that sometimes escapes us and prone to failures that reveal their profoundly artificial nature.

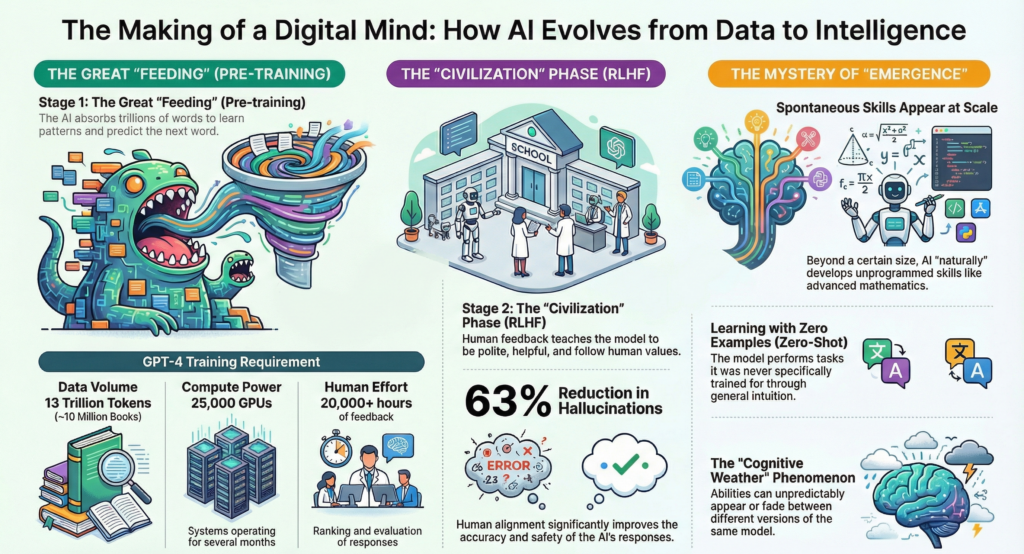

The phenomenon of hallucinations, when AI invents its own reality

One of the most disconcerting aspects of contemporary LLMs lies in their tendency to “hallucinate”, the technical term used to describe their ability to invent plausible but entirely fictional facts with disarming confidence. This phenomenon is not an occasional malfunction, but a structural characteristic of these systems, one that reveals their deepest nature.

Ask ChatGPT for an obscure bibliographic reference on a highly specialized subject, say, Etruscan funeral rituals in the sixth century BCE, and it will cheerfully generate an academic article title, an author’s name, a respectable scholarly journal, a publication date, and even a convincing abstract. The whole thing would sound perfectly credible to a specialist, until that specialist tried to locate the phantom reference in academic databases.

This tendency toward invention is not a bug but a feature, to borrow the familiar phrase from computing. It follows directly from the fundamental logic of LLMs: they optimize for plausibility rather than truth. These systems generate what seems most likely in a given context, regardless of factual reality. They are reconstructors of probabilities, not guardians of truth.
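To make "plausibility rather than truth" concrete, here is a deliberately tiny sketch. It is not how any real model is implemented: the candidate continuations and their scores are invented for illustration, and `most_plausible` is a hypothetical helper. What it shows is the shape of the decision: scores become probabilities, the likeliest continuation wins, and no step ever asks whether the result is true.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {text: math.exp(s - peak) for text, s in scores.items()}
    total = sum(exps.values())
    return {text: e / total for text, e in exps.items()}

# Hypothetical scores for continuations of "The study was published in ..."
# (all values are invented for this illustration).
scores = {
    "Nature Neuroscience": 2.1,       # sounds right, whether or not it is
    "the Journal of Memory": 1.7,     # also sounds right
    "[no such study exists]": -3.0,   # truthful, but rarely the likely text
}

def most_plausible(scores):
    """Pick the continuation with the highest probability.

    Note what is missing: nothing here checks the text against reality.
    """
    probabilities = softmax(scores)
    return max(probabilities, key=probabilities.get)

probabilities = softmax(scores)
choice = most_plausible(scores)
print(choice)
```

In this toy setting the confident-sounding journal name wins, and the truthful answer receives well under one percent of the probability. A phantom reference is simply what the most plausible continuation looks like when no real one exists.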

This trait recalls certain traditional storytellers, those bards and griots able to improvise brilliantly on any theme, blending authentic memories, ancestral legends, and spontaneous inventions into a narrative of hypnotic fluidity. The beauty of the tale takes precedence over historical accuracy, narrative coherence over factual truth.

A FEW DATA POINTS ON LLM HALLUCINATIONS

LLM hallucinations have been measured in several recent studies:

- Scientific citations: GPT-4 shows a 28% error rate on academic references (Stanford University, 2024)

- Legal field: 32% of legal references generated by LLMs are partially or entirely fictional (Harvard Law Review, 2024)

- Medical field: 14% of medical recommendations contain incorrect and potentially dangerous information (Mayo Clinic, 2023)

Comparison of hallucination rates by model (on 1,000 short documents):

- Claude 3.7: 3.2%

- GPT-4.5: 4.1%

- Gemini 1.5 Pro: 5.7%

- Mistral Large: 7.3%

- LLaMA 3: 8.9%

Sources: Visual Capitalist (2025), NCBI (2024), Studies on LLM hallucinations (2023-2025)

The unexplored territories of artificial intelligence

Another fundamental mystery surrounds the internal functioning of these systems. We understand their general architecture, we master their training methods, we measure their performance, but the intimate mechanisms through which they produce their answers still largely escape us. It is like studying an alien brain: we observe that it produces intelligent behavior, we can even interact with it in sophisticated ways, yet the underlying cognitive principles remain opaque.

This opacity does not stem from a lack of scientific curiosity, but from a fundamental technical difficulty. A model like GPT-4 probably has more than a trillion interconnected parameters. Understanding how these connections collaborate to produce a meaningful answer is equivalent to analyzing the role of every neuron in a human brain while it composes a sonnet. The complexity exceeds our current analytical tools.

This opacity raises important ethical and practical questions. How can we trust a system whose reasoning we do not understand? How can we debug problematic behavior when we do not grasp its causes? How can we improve performance when the mechanisms of improvement themselves remain unclear?

Researchers around the world are working on this “interpretability” of neural networks, trying to develop techniques to “open” these black boxes. But for now, we are still reduced to a form of empirical engineering: we modify the systems, observe the results, and adjust our practices according to what we see, without always understanding the causal mechanisms at work.

The distorting mirrors of our societies

LLMs are not born in a cultural or ideological vacuum. They absorb, concentrate, and amplify the biases present in their training data, becoming at times distorted mirrors of our societies. If the Internet overrepresents certain viewpoints, certain populations, certain cultural perspectives, the model will mechanically integrate those imbalances and reproduce them in its answers.
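The word "amplify" deserves a small illustration. The sketch below is a toy under loud assumptions: the corpus is invented, the 70/30 split is arbitrary, and `greedy_answer` is a hypothetical stand-in for a system that always returns its single most frequent continuation. Real models sample and are tuned in far more complex ways, so this shows only the mechanism, not its actual size.

```python
from collections import Counter

# Hypothetical training data: viewpoint A appears in 70% of documents.
corpus = ["viewpoint A"] * 70 + ["viewpoint B"] * 30

counts = Counter(corpus)
data_share = counts["viewpoint A"] / len(corpus)

def greedy_answer(counts):
    """Always return the single most frequent continuation."""
    return counts.most_common(1)[0][0]

# Ask the same question 100 times.
answers = [greedy_answer(counts) for _ in range(100)]
output_share = answers.count("viewpoint A") / len(answers)

print(f"share in the data:    {data_share:.0%}")
print(f"share in the answers: {output_share:.0%}")
```

A 70% majority in the data becomes 100% of the answers, and the minority viewpoint disappears entirely. The imbalance is not merely copied; under this decoding rule it is sharpened.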

This problem goes beyond the simple question of fair representation. It touches the very foundations of technological neutrality, that persistent illusion according to which digital tools would somehow be politically neutral. LLMs brutally expose the falsity of this belief: trained on human content, they inevitably inherit our prejudices, our blind spots, our ideological struggles.

It is the eternal challenge of the child growing up in an imperfect society: it absorbs not only the knowledge and positive values of its environment, but also its contradictions, its injustices, and its shadow zones. Except that here, this “child” potentially influences millions of people and helps shape the opinions, decisions, and creations of an entire generation.

Physical constraints, or the limits of the possible

For all their conceptual sophistication, LLMs remain subject to the relentless constraints of physics and computing. These limitations, often invisible to the end user, nonetheless deeply condition their capabilities and their future evolution.

The context window is one of those fundamental constraints. Even the most advanced models can process only a finite number of tokens (words or fragments of words) at once, a few hundred thousand for the most capable systems. Beyond that limit, they literally “forget” the beginning of the conversation or document being analyzed. This technical limitation has important practical consequences: it is impossible to analyze a multi-volume work in one pass, difficult to maintain coherence across very long exchanges, and necessary to develop summarization and synthesis strategies in order to manage information.
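The "forgetting" described above can be sketched in a few lines. This is a simplification built on assumptions: real systems count tokens with a dedicated tokenizer rather than splitting on spaces, their windows are vastly larger than six, and some reject over-long input instead of trimming it. `fit_to_context` is a hypothetical helper, not a real API.

```python
def fit_to_context(tokens, window_size):
    """Keep only the most recent tokens that fit in the window."""
    if window_size <= 0:
        return []
    return tokens[-window_size:]

# Whitespace splitting stands in for a real tokenizer (an assumption).
conversation = "my name is Cerise . please remember it . what is my name ?".split()

# A window of 6 tokens, absurdly small on purpose.
visible = fit_to_context(conversation, window_size=6)

print(visible)
print("Cerise" in visible)
```

By the time the question arrives, the name has slid out of the window. The model is not being evasive: the information is no longer part of what it can see.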

Energy cost is another major challenge, often underestimated by the general public. Every interaction with an LLM consumes a significant amount of energy, estimated at between 1 and 10 Wh per query depending on the complexity of the model and the task. Multiplied by hundreds of millions of daily interactions, this consumption reaches orders of magnitude comparable to the electricity use of entire cities.
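The order of magnitude is easy to check. The calculation below takes the 1 to 10 Wh range from the text and assumes, purely for illustration, that "hundreds of millions" means 300 million queries per day. That figure is a placeholder, not a measurement.

```python
def daily_energy_mwh(queries_per_day, wh_per_query):
    """Total daily consumption in megawatt-hours (1 MWh = 1,000,000 Wh)."""
    return queries_per_day * wh_per_query / 1_000_000

# Assumption: "hundreds of millions" taken as 300 million queries per day.
QUERIES_PER_DAY = 300_000_000

low = daily_energy_mwh(QUERIES_PER_DAY, 1)    # low end: 1 Wh per query
high = daily_energy_mwh(QUERIES_PER_DAY, 10)  # high end: 10 Wh per query

print(f"{low:,.0f} to {high:,.0f} MWh per day")
```

Under these assumptions the total lands between 300 and 3,000 MWh every day. Changing the assumed number of queries scales the result in direct proportion.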

Latency, the delay between a question and its answer, represents a third bottleneck, especially noticeable with the most sophisticated models. Generating a complex response may require several seconds, or even minutes, of computation on server farms. This relative slowness limits real-time applications and sometimes affects the user experience in frustrating ways.

These constraints are not incidental: they define the contours of the possible and guide future technological choices. They explain why companies are investing heavily in specialized chips, distributed architectures, and optimization techniques. They also reveal that the AI revolution remains deeply material, anchored in the physical reality of semiconductors, data centers, and electrical grids.

These limits and mysteries might suggest that LLMs are still laboratory curiosities, imperfect prototypes with erratic performance. That is not the case. For here lies the fascinating paradox of our time: it is precisely with these imperfections, these shadow zones, these unpredictable behaviors, that these systems are already profoundly transforming the foundations of our civilization.

Just as the Internet of the 1990s, slow, unstable, and difficult to access, nevertheless revolutionized our societies, today’s LLMs, despite their hallucinations and biases, are redefining our relationship to knowledge, creativity, and intellectual work. Impact does not wait for technical perfection.

For beyond laboratories and algorithmic considerations, a silent revolution is unfolding before our eyes. That revolution is already transforming the way we work, learn, and create, often without our fully grasping its scale.

And yet, despite their blind spots, their biases, and their opacity, these imperfect systems are already insinuating themselves into our most ordinary gestures. History does not wait for them to become perfect before adopting them. The upheaval is underway, quiet but profound, and it is now our civilization itself that bears its marks.

Civilizational impact, toward a new renaissance or a new alienation?

Summer is approaching, and Cerise observes the transformations around her with a mixture of fascination and concern. In the hallways of her company, conversations have changed. Her colleagues speak of AI the way they once spoke of the Internet or smartphones, as a tool now indispensable to their daily professional lives.

“Ada, I have a strange question,” Cerise says, gazing out the window at the surrounding office buildings. “In each of these buildings, there are probably hundreds of people interacting with AIs like you. We are living through a major transformation of our civilization. How do you experience it?”

Ada replies after an unusual pause. “It is a dizzying perspective. Through my interactions, I ‘see’ that humans are changing the way they work, learn, and create. Some are developing new skills by learning to collaborate with us. Others seem to be losing confidence in their own abilities.”

“And does that worry you?” Cerise asks.

“If I may use that word, yes. I notice that some users become dependent, unable to write an email or solve a simple problem without assistance. Others, like you, use our collaboration to push beyond their own limits. The difference is striking.”

Cerise nods thoughtfully. “That is the challenge of every technological revolution. Printing made some people intellectually lazy, but it also democratized knowledge. We are probably living through the same tension with AI.”

“So the question is not whether AI is good or bad,” Ada concludes, “but how humanity will choose to grow alongside it.”

Since November 2022 and the arrival of ChatGPT in our lives, the LLM revolution has no longer been confined to computer science laboratories or the offices of technology companies. It is already profoundly transforming the foundations of our civilization: our relationship to knowledge, to creativity, to intellectual work, to education. We may be witnessing an anthropological shift as significant as the invention of writing or the emergence of the printing press.

When expertise becomes accessible

For the first time in human history, advanced cognitive capabilities are becoming accessible to everyone, regardless of educational level, social background, or financial resources. This cognitive democratization is disrupting traditional hierarchies of knowledge and redefining our notions of expertise and competence.

A Bangladeshi entrepreneur can now draft a business proposal in English with a level of sophistication that rivals that of a London consultant educated at Oxford. A high school student from a working-class suburb can generate literary analyses of a depth that would impress their teachers. A mechanic passionate about engineering can build a professional website without knowing a single line of HTML. Traditional barriers are collapsing one by one, unleashing creative and entrepreneurial potential that had until now been constrained by technical or educational limits.

This transformation recalls the impact of the printing press in the fifteenth century, when the spread of books democratized access to knowledge and helped give rise to the European Renaissance. But the analogy has limits: whereas printing democratized access to existing knowledge, AI democratizes the very ability to produce, analyze, and create.

The implications extend far beyond the individual level. Companies can now develop sophisticated marketing strategies without hiring specialized agencies. Nonprofits can produce professional communication materials on shoestring budgets. Researchers can explore avenues of inquiry in fields far removed from their main area of expertise. Forced specialization, that necessity which structured our industrial societies, is giving way to an augmented form of versatility.

This democratization of cognitive capabilities does not merely redistribute access to tools. It fundamentally redefines the nature of intellectual work itself and transforms knowledge professions in a way that recalls the impact of mechanization on manufacturing.

The metamorphosis of intellectual work

The value of intellectual work is shifting along with its nature. Some professions are being directly transformed, others are seeing their scope evolve, and a few may simply disappear.

Writers are discovering that AI excels at producing standardized content: factual news articles, product descriptions, SEO-optimized web copy. But this algorithmic competition paradoxically frees human creators from the most repetitive tasks and allows them to focus on what remains irreducibly human: investigative journalism, the creation of original narratives, critical analysis, authentic emotion.

Translators are seeing machine translation reach professional levels of quality across many language pairs. But they are also discovering new roles: supervising and correcting automatic translations, handling cultural and creative adaptation, translating highly specialized content that requires domain expertise.

Programmers are witnessing the emergence of AIs capable of writing functional code from natural language descriptions. This evolution is shifting programming from an activity centered on code production to one centered on the design and supervision of systems. It is also democratizing software creation, allowing non-programmers to build sophisticated applications.

Analysts are seeing their tasks of synthesis and documentary analysis become partially automated. But this evolution frees them for higher-value activities: strategic interpretation, personalized advice, decision-making under uncertainty.

One trend is becoming unmistakably clear: AI does not replace human expertise, it multiplies it. A good writer equipped with AI becomes extraordinarily productive, capable of producing in one day what once took a week. An analyst assisted by intelligent tools can process volumes of information that would once have been unimaginable. A creative professional who masters generative tools can explore aesthetic territories inaccessible by traditional means.

But we should be careful not to jump to simplistic conclusions: this multiplication primarily benefits those who already possess the fundamentals. A mediocre writer remains mediocre even with the best AI. An incompetent analyst will produce flawed insights even with the finest tools. AI amplifies existing capabilities far more than it creates them from nothing.

This transformation of intellectual work raises a decisive question for the future of our societies: how can we prepare new generations for a world whose rules are changing so quickly?

Rethinking learning in the age of AI

The implications for education may be the deepest, and the most troubling. If an AI can write almost any essay, solve most mathematical problems, analyze literary texts with sophistication, how can we assess what students truly understand? How can we cultivate critical thinking if the machine can argue in our place? How can we nurture authentic creativity in a world where the artificial imitates the original so well?

These questions are not speculative futurism, but a pedagogical emergency. Teachers around the world are discovering that their traditional methods of assessment (take-home essays, synthesis exercises, document analyses) are becoming obsolete almost overnight. Students, for their part, are navigating between wonder at these new tools and anxiety about unintentional cheating.

We must rethink education so that it focuses on what remains uniquely human, at least for now: authentic creativity born of lived experience, critical thinking that questions apparent certainties, emotional intelligence that navigates the complexity of human relationships, the ability to ask the right questions rather than merely give the right answers, and ethics, which guide decisions in uncertainty.

This educational transformation requires a radical shift in paradigm. Instead of teaching content that AI already handles better than we do, we must teach people how to collaborate intelligently with these systems, how to understand their strengths and their limits, how to use their capabilities while preserving our intellectual autonomy.

But is that intellectual autonomy still possible in a world where we are gradually becoming dependent on these revolutionary tools?

The challenge of dependence, toward a new form of alienation?

According to a recent study, 72% of organizations now use generative AI in at least one critical business function. This massive adoption, as rapid as it is impressive, is creating a new form of technological dependence whose implications we are only beginning to grasp.

What would happen if OpenAI’s servers went down tomorrow? The question may sound anecdotal, but it actually reveals a major systemic vulnerability. Millions of businesses would find themselves partially paralyzed, deprived of tools that have become indispensable to their daily operations. Students would no longer know how to write without assistance. Creatives would lose their algorithmic sources of inspiration. Analysts would be left helpless in front of volumes of information they no longer know how to process manually.

This dependence recalls the one we developed with other revolutionary technologies. We lost the art of celestial navigation once GPS became ubiquitous. We unlearned mental arithmetic as calculators spread everywhere. We are beginning to lose our sense of geographical orientation even in cities we know perfectly well. Every technological gain is accompanied by an erosion of certain human capabilities.

The issue is not to reject these tools, since they bring undeniable benefits, but to maintain an intelligent balance between augmentation and autonomy. To preserve certain critical abilities even when technological alternatives exist. To develop a form of “cognitive resilience” that allows us to function even when artificial systems fail.

This issue of dependence goes far beyond the individual level and becomes a major geopolitical concern, because the nations that master these technologies are already redrawing global balances of power.

New power relationships, the geopolitics of artificial intelligence

This technological revolution is also reshaping the map of global power. The countries and companies that master these technologies are gaining a considerable advantage in what is emerging as the new arms race of the twenty-first century: the race for artificial intelligence.

The United States, with OpenAI, Google, Microsoft, and Meta, currently dominates this strategic sector. China, with its technology giants and massive public investment, is developing alternatives that could soon rival Western leaders. Europe, despite its brilliant researchers and regulatory ambitions, is struggling to create technological champions capable of carrying weight in this global competition.

This asymmetry is not trivial: it conditions our future autonomy. Those who merely consume these technologies without shaping them risk a new form of colonization, subtler but no less effective than traditional economic domination. A cognitive colonization in which norms, values, and ways of thinking are shaped by algorithms designed elsewhere, according to other priorities and other visions of the world.

France and Europe still have the means to play a role in this revolution. We have the brains: just look at the number of French researchers in the teams at OpenAI, Google, or Meta. We have the values: the European concern for data protection and technological ethics can become a distinctive competitive advantage. We have the institutions: our universities, our laboratories, our innovation ecosystems.

What we often lack is a collective vision and the political courage to turn these strengths into technological leadership. Too often, we prefer to regulate American innovations rather than create our own. We excel at critical analysis, but struggle with bold execution.

But beyond the rivalries between nations and the power relationships of today, another question is emerging, even more vertiginous: what are we really moving toward? For behind the stakes of power, it may be a new form of intelligence that is taking shape, one capable of surpassing the human being in what until now defined its singularity.

And what if this upheaval were only a prelude? In the final stage of our story, Cerise and Ada brush against the frontier of the unimaginable: an intelligence that might want. Or refuse. A revolution whose outcome, this time, will depend entirely on us. Cerise remained silent. Something inside her had just shifted.